Add pm, git, and dependencies

parent 615163a7f6

commit 940fb66fe9

|

|

@ -18,6 +18,13 @@

|

||||||

"program": "src/hook.pyw",

|

"program": "src/hook.pyw",

|

||||||

"console": "integratedTerminal"

|

"console": "integratedTerminal"

|

||||||

},

|

},

|

||||||

|

{

|

||||||

|

"name": "Python Debugger: Current File",

|

||||||

|

"type": "debugpy",

|

||||||

|

"request": "launch",

|

||||||

|

"program": "${file}",

|

||||||

|

"console": "integratedTerminal"

|

||||||

|

},

|

||||||

{

|

{

|

||||||

"command": "make debug",

|

"command": "make debug",

|

||||||

"name": "Package and run in a virtual machine",

|

"name": "Package and run in a virtual machine",

|

||||||

|

|

|

||||||

Binary file not shown.

|

|

@ -1 +0,0 @@

|

||||||

echo helloworld

|

|

||||||

|

|

@ -1 +0,0 @@

|

||||||

nihaoshijie

|

|

||||||

|

|

@ -1,55 +0,0 @@

|

||||||

{

|

|

||||||

"version": "0.0.1",

|

|

||||||

"name": "cli-tools",

|

|

||||||

"author": "cxykevin",

|

|

||||||

"introduce": "PEinjector/command-line tools",

|

|

||||||

"compatibility": {

|

|

||||||

"injector": {

|

|

||||||

"min": "0.0.1",

|

|

||||||

"max": "0.0.1"

|

|

||||||

}

|

|

||||||

},

|

|

||||||

"load": {

|

|

||||||

"symlink": true,

|

|

||||||

"mode": {

|

|

||||||

"onboot": [

|

|

||||||

{

|

|

||||||

"type": "copy",

|

|

||||||

"from": "cli",

|

|

||||||

"to": "X:/Windows/system32"

|

|

||||||

}

|

|

||||||

],

|

|

||||||

"onload": [

|

|

||||||

{

|

|

||||||

"type": "start",

|

|

||||||

"command": "cmd"

|

|

||||||

}

|

|

||||||

]

|

|

||||||

}

|

|

||||||

},

|

|

||||||

"start": {

|

|

||||||

"icon": {

|

|

||||||

"name": "CMD",

|

|

||||||

"command": "cmd",

|

|

||||||

"icon": "X:/Windows/system32/cmd.exe"

|

|

||||||

},

|

|

||||||

"path": [

|

|

||||||

"X:/Windows/system32/cli"

|

|

||||||

]

|

|

||||||

},

|

|

||||||

"reg": [

|

|

||||||

"1.reg"

|

|

||||||

],

|

|

||||||

"keywords": [

|

|

||||||

"cli",

|

|

||||||

"PEinjector"

|

|

||||||

],

|

|

||||||

"dependence": [],

|

|

||||||

"datas": [

|

|

||||||

{

|

|

||||||

"type": "dir",

|

|

||||||

"from": "cli/test.txt",

|

|

||||||

"to": "data1"

|

|

||||||

}

|

|

||||||

]

|

|

||||||

}

|

|

||||||

|

|

@ -0,0 +1,17 @@

|

||||||

|

{

|

||||||

|

"version": "0.0.1",

|

||||||

|

"name": "git",

|

||||||

|

"author": "cxykevin,undefined",

|

||||||

|

"introduce": "Git version control tool",

|

||||||

|

"keywords": [

|

||||||

|

"git",

|

||||||

|

"develop",

|

||||||

|

"PEinjector"

|

||||||

|

],

|

||||||

|

"dependence": [],

|

||||||

|

"start": {

|

||||||

|

"path": [

|

||||||

|

"Git/bin"

|

||||||

|

]

|

||||||

|

}

|

||||||

|

}

|

||||||

|

|

@ -0,0 +1,31 @@

|

||||||

|

{

|

||||||

|

"version": "0.0.1",

|

||||||

|

"name": "pmapi",

|

||||||

|

"author": "PEinjector",

|

||||||

|

"introduce": "PEinjector/package management API",

|

||||||

|

"compatibility": {

|

||||||

|

"injector": {

|

||||||

|

"min": "0.0.1"

|

||||||

|

}

|

||||||

|

},

|

||||||

|

"load": {

|

||||||

|

"symlink": true,

|

||||||

|

"mode": {

|

||||||

|

"onload": [

|

||||||

|

{

|

||||||

|

"type": "copy",

|

||||||

|

"from": "pm_api",

|

||||||

|

"to": "X:/PEinjector/pmapi"

|

||||||

|

}

|

||||||

|

]

|

||||||

|

}

|

||||||

|

},

|

||||||

|

"keywords": [

|

||||||

|

"pm",

|

||||||

|

"pmapi",

|

||||||

|

"PEinjector"

|

||||||

|

],

|

||||||

|

"dependence": [

|

||||||

|

"git"

|

||||||

|

]

|

||||||

|

}

|

||||||

|

|

@ -0,0 +1,4 @@

|

||||||

|

import load_packages # runs automatically on import

|

||||||

|

import log

|

||||||

|

load_packages.do_nothing()

|

||||||

|

log.info("--- log start ---")

|

||||||

|

|

@ -0,0 +1,3 @@

|

||||||

|

LOGLEVEL = "debug"

|

||||||

|

OUTPUT_COLORFUL = True

|

||||||

|

OUTPUT_STD = True

|

||||||

|

|

@ -0,0 +1 @@

|

||||||

|

import requests

|

||||||

|

|

@ -0,0 +1,13 @@

|

||||||

|

import sys

|

||||||

|

import os

|

||||||

|

|

||||||

|

|

||||||

|

def load_path(): # add bundled third-party packages to the import path

|

||||||

|

sys.path.append(os.path.dirname(__file__)+os.sep+"tool")

|

||||||

|

|

||||||

|

|

||||||

|

def do_nothing(): # no-op; calling it keeps checkers from flagging the import as unused

|

||||||

|

pass

|

||||||

|

|

||||||

|

|

||||||

|

load_path()

|

||||||

|

|

@ -0,0 +1,55 @@

|

||||||

|

# ------ cxykevin log module ------

|

||||||

|

# This module is not a part of this project.

|

||||||

|

# This file is CopyNone.

|

||||||

|

|

||||||

|

|

||||||

|

import logging

|

||||||

|

import config

|

||||||

|

import sys

|

||||||

|

import os

|

||||||

|

levels = {

|

||||||

|

"debug": logging.DEBUG,

|

||||||

|

'info': logging.INFO,

|

||||||

|

'warning': logging.WARN,

|

||||||

|

'err': logging.ERROR

|

||||||

|

}

|

||||||

|

|

||||||

|

|

||||||

|

logging.basicConfig(filename=os.path.dirname(__file__)+os.sep+"log.log",

|

||||||

|

format='[%(asctime)s][%(levelname)s] %(message)s',

|

||||||

|

level=levels[config.LOGLEVEL], filemode="a")

|

||||||

|

|

||||||

|

|

||||||

|

def info(msg: str) -> None:

|

||||||

|

if (20 >= levels[config.LOGLEVEL] and config.OUTPUT_STD):

|

||||||

|

sys.stdout.write(

|

||||||

|

("[\033[34mINFO\033[0m]" if config.OUTPUT_COLORFUL else "[INFO]") + msg + "\n")

|

||||||

|

logging.info(msg)

|

||||||

|

|

||||||

|

|

||||||

|

def warn(msg: str) -> None:

|

||||||

|

if (30 >= levels[config.LOGLEVEL] and config.OUTPUT_STD):

|

||||||

|

sys.stdout.write(

|

||||||

|

("[\033[33mWARNING\033[0m]" if config.OUTPUT_COLORFUL else "[WARNING]") + msg + "\n")

|

||||||

|

logging.warning(msg)

|

||||||

|

|

||||||

|

|

||||||

|

def err(msg: str) -> None:

|

||||||

|

if (40 >= levels[config.LOGLEVEL] and config.OUTPUT_STD):

|

||||||

|

sys.stdout.write(

|

||||||

|

("[\033[31mERROR\033[0m]" if config.OUTPUT_COLORFUL else "[ERROR]") + msg + "\n")

|

||||||

|

logging.error(msg)

|

||||||

|

|

||||||

|

|

||||||

|

def break_err(msg: str) -> None:

|

||||||

|

if (40 >= levels[config.LOGLEVEL] and config.OUTPUT_STD):

|

||||||

|

sys.stdout.write(

|

||||||

|

("[\033[31mERROR\033[0m]" if config.OUTPUT_COLORFUL else "[ERROR]") + msg + "\n")

|

||||||

|

logging.error(msg)

|

||||||

|

|

||||||

|

|

||||||

|

def print(*args, end="\n") -> None:

|

||||||

|

msg = ' '.join(map(str, args))

|

||||||

|

if (10 >= levels[config.LOGLEVEL]):

|

||||||

|

sys.stdout.write(msg + end)

|

||||||

|

logging.debug(msg)

|

||||||

|

|

@ -0,0 +1 @@

|

||||||

|

pip

|

||||||

|

|

@ -0,0 +1,20 @@

|

||||||

|

This package contains a modified version of ca-bundle.crt:

|

||||||

|

|

||||||

|

ca-bundle.crt -- Bundle of CA Root Certificates

|

||||||

|

|

||||||

|

This is a bundle of X.509 certificates of public Certificate Authorities

|

||||||

|

(CA). These were automatically extracted from Mozilla's root certificates

|

||||||

|

file (certdata.txt). This file can be found in the mozilla source tree:

|

||||||

|

https://hg.mozilla.org/mozilla-central/file/tip/security/nss/lib/ckfw/builtins/certdata.txt

|

||||||

|

It contains the certificates in PEM format and therefore

|

||||||

|

can be directly used with curl / libcurl / php_curl, or with

|

||||||

|

an Apache+mod_ssl webserver for SSL client authentication.

|

||||||

|

Just configure this file as the SSLCACertificateFile.#

|

||||||

|

|

||||||

|

***** BEGIN LICENSE BLOCK *****

|

||||||

|

This Source Code Form is subject to the terms of the Mozilla Public License,

|

||||||

|

v. 2.0. If a copy of the MPL was not distributed with this file, You can obtain

|

||||||

|

one at http://mozilla.org/MPL/2.0/.

|

||||||

|

|

||||||

|

***** END LICENSE BLOCK *****

|

||||||

|

@(#) $RCSfile: certdata.txt,v $ $Revision: 1.80 $ $Date: 2011/11/03 15:11:58 $

|

||||||

|

|

@ -0,0 +1,66 @@

|

||||||

|

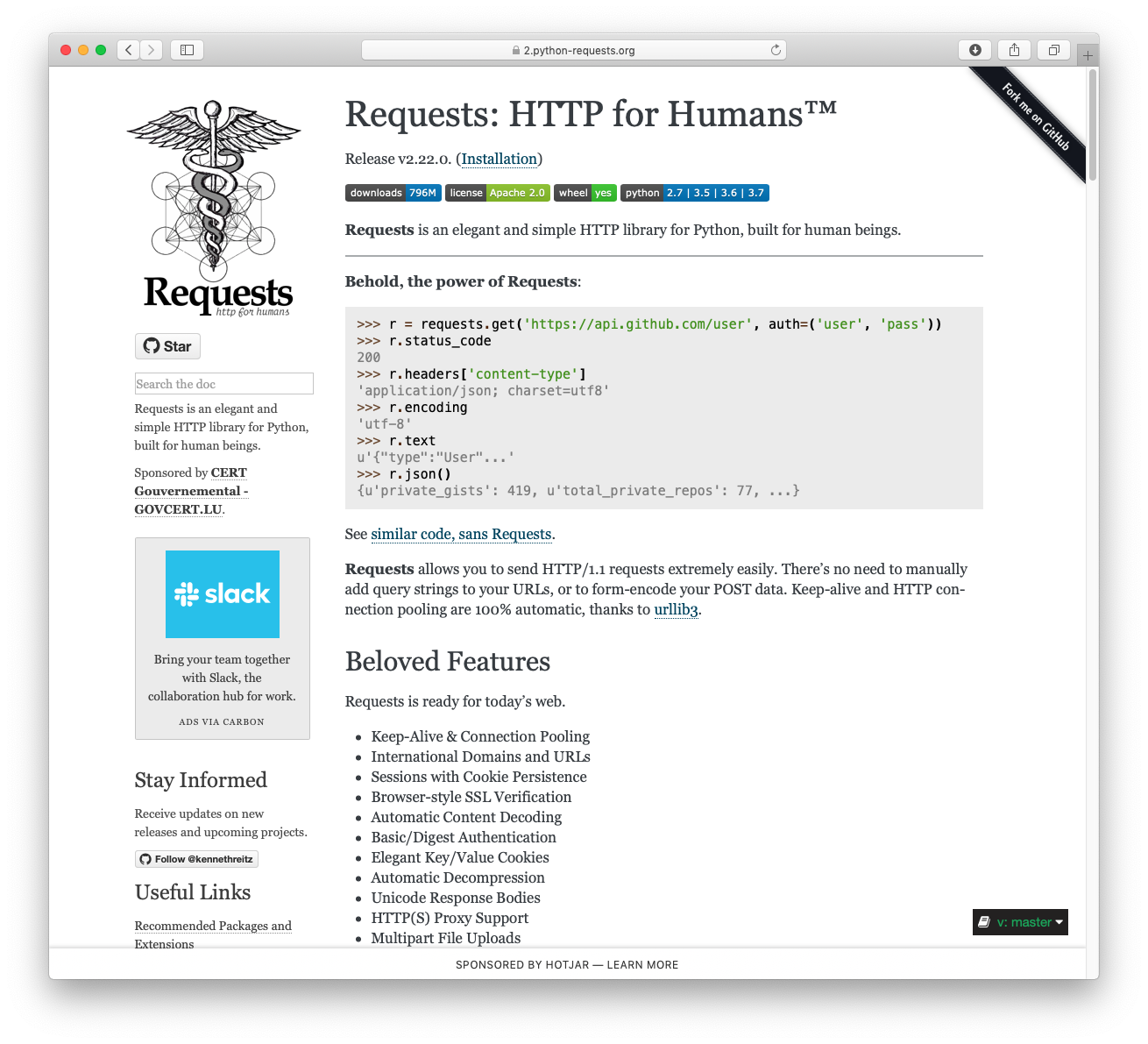

Metadata-Version: 2.1

|

||||||

|

Name: certifi

|

||||||

|

Version: 2024.2.2

|

||||||

|

Summary: Python package for providing Mozilla's CA Bundle.

|

||||||

|

Home-page: https://github.com/certifi/python-certifi

|

||||||

|

Author: Kenneth Reitz

|

||||||

|

Author-email: me@kennethreitz.com

|

||||||

|

License: MPL-2.0

|

||||||

|

Project-URL: Source, https://github.com/certifi/python-certifi

|

||||||

|

Classifier: Development Status :: 5 - Production/Stable

|

||||||

|

Classifier: Intended Audience :: Developers

|

||||||

|

Classifier: License :: OSI Approved :: Mozilla Public License 2.0 (MPL 2.0)

|

||||||

|

Classifier: Natural Language :: English

|

||||||

|

Classifier: Programming Language :: Python

|

||||||

|

Classifier: Programming Language :: Python :: 3

|

||||||

|

Classifier: Programming Language :: Python :: 3 :: Only

|

||||||

|

Classifier: Programming Language :: Python :: 3.6

|

||||||

|

Classifier: Programming Language :: Python :: 3.7

|

||||||

|

Classifier: Programming Language :: Python :: 3.8

|

||||||

|

Classifier: Programming Language :: Python :: 3.9

|

||||||

|

Classifier: Programming Language :: Python :: 3.10

|

||||||

|

Classifier: Programming Language :: Python :: 3.11

|

||||||

|

Requires-Python: >=3.6

|

||||||

|

License-File: LICENSE

|

||||||

|

|

||||||

|

Certifi: Python SSL Certificates

|

||||||

|

================================

|

||||||

|

|

||||||

|

Certifi provides Mozilla's carefully curated collection of Root Certificates for

|

||||||

|

validating the trustworthiness of SSL certificates while verifying the identity

|

||||||

|

of TLS hosts. It has been extracted from the `Requests`_ project.

|

||||||

|

|

||||||

|

Installation

|

||||||

|

------------

|

||||||

|

|

||||||

|

``certifi`` is available on PyPI. Simply install it with ``pip``::

|

||||||

|

|

||||||

|

$ pip install certifi

|

||||||

|

|

||||||

|

Usage

|

||||||

|

-----

|

||||||

|

|

||||||

|

To reference the installed certificate authority (CA) bundle, you can use the

|

||||||

|

built-in function::

|

||||||

|

|

||||||

|

>>> import certifi

|

||||||

|

|

||||||

|

>>> certifi.where()

|

||||||

|

'/usr/local/lib/python3.7/site-packages/certifi/cacert.pem'

|

||||||

|

|

||||||

|

Or from the command line::

|

||||||

|

|

||||||

|

$ python -m certifi

|

||||||

|

/usr/local/lib/python3.7/site-packages/certifi/cacert.pem

|

||||||

|

|

||||||

|

Enjoy!

|

||||||

|

|

||||||

|

.. _`Requests`: https://requests.readthedocs.io/en/master/

|

||||||

|

|

||||||

|

Addition/Removal of Certificates

|

||||||

|

--------------------------------

|

||||||

|

|

||||||

|

Certifi does not support any addition/removal or other modification of the

|

||||||

|

CA trust store content. This project is intended to provide a reliable and

|

||||||

|

highly portable root of trust to python deployments. Look to upstream projects

|

||||||

|

for methods to use alternate trust.

|

||||||

|

|

@ -0,0 +1,14 @@

|

||||||

|

certifi-2024.2.2.dist-info/INSTALLER,sha256=zuuue4knoyJ-UwPPXg8fezS7VCrXJQrAP7zeNuwvFQg,4

|

||||||

|

certifi-2024.2.2.dist-info/LICENSE,sha256=6TcW2mucDVpKHfYP5pWzcPBpVgPSH2-D8FPkLPwQyvc,989

|

||||||

|

certifi-2024.2.2.dist-info/METADATA,sha256=1noreLRChpOgeSj0uJT1mehiBl8ngh33Guc7KdvzYYM,2170

|

||||||

|

certifi-2024.2.2.dist-info/RECORD,,

|

||||||

|

certifi-2024.2.2.dist-info/WHEEL,sha256=oiQVh_5PnQM0E3gPdiz09WCNmwiHDMaGer_elqB3coM,92

|

||||||

|

certifi-2024.2.2.dist-info/top_level.txt,sha256=KMu4vUCfsjLrkPbSNdgdekS-pVJzBAJFO__nI8NF6-U,8

|

||||||

|

certifi/__init__.py,sha256=ljtEx-EmmPpTe2SOd5Kzsujm_lUD0fKJVnE9gzce320,94

|

||||||

|

certifi/__main__.py,sha256=xBBoj905TUWBLRGANOcf7oi6e-3dMP4cEoG9OyMs11g,243

|

||||||

|

certifi/__pycache__/__init__.cpython-312.pyc,,

|

||||||

|

certifi/__pycache__/__main__.cpython-312.pyc,,

|

||||||

|

certifi/__pycache__/core.cpython-312.pyc,,

|

||||||

|

certifi/cacert.pem,sha256=ejR8qP724p-CtuR4U1WmY1wX-nVeCUD2XxWqj8e9f5I,292541

|

||||||

|

certifi/core.py,sha256=qRDDFyXVJwTB_EmoGppaXU_R9qCZvhl-EzxPMuV3nTA,4426

|

||||||

|

certifi/py.typed,sha256=47DEQpj8HBSa-_TImW-5JCeuQeRkm5NMpJWZG3hSuFU,0

|

||||||

|

|

@ -0,0 +1,5 @@

|

||||||

|

Wheel-Version: 1.0

|

||||||

|

Generator: bdist_wheel (0.42.0)

|

||||||

|

Root-Is-Purelib: true

|

||||||

|

Tag: py3-none-any

|

||||||

|

|

||||||

|

|

@ -0,0 +1 @@

|

||||||

|

certifi

|

||||||

|

|

@ -0,0 +1,4 @@

|

||||||

|

from .core import contents, where

|

||||||

|

|

||||||

|

__all__ = ["contents", "where"]

|

||||||

|

__version__ = "2024.02.02"

|

||||||

|

|

@ -0,0 +1,12 @@

|

||||||

|

import argparse

|

||||||

|

|

||||||

|

from certifi import contents, where

|

||||||

|

|

||||||

|

parser = argparse.ArgumentParser()

|

||||||

|

parser.add_argument("-c", "--contents", action="store_true")

|

||||||

|

args = parser.parse_args()

|

||||||

|

|

||||||

|

if args.contents:

|

||||||

|

print(contents())

|

||||||

|

else:

|

||||||

|

print(where())

|

||||||

File diff suppressed because it is too large

|

|

@ -0,0 +1,114 @@

|

||||||

|

"""

|

||||||

|

certifi.py

|

||||||

|

~~~~~~~~~~

|

||||||

|

|

||||||

|

This module returns the installation location of cacert.pem or its contents.

|

||||||

|

"""

|

||||||

|

import sys

|

||||||

|

import atexit

|

||||||

|

|

||||||

|

def exit_cacert_ctx() -> None:

|

||||||

|

_CACERT_CTX.__exit__(None, None, None) # type: ignore[union-attr]

|

||||||

|

|

||||||

|

|

||||||

|

if sys.version_info >= (3, 11):

|

||||||

|

|

||||||

|

from importlib.resources import as_file, files

|

||||||

|

|

||||||

|

_CACERT_CTX = None

|

||||||

|

_CACERT_PATH = None

|

||||||

|

|

||||||

|

def where() -> str:

|

||||||

|

# This is slightly terrible, but we want to delay extracting the file

|

||||||

|

# in cases where we're inside of a zipimport situation until someone

|

||||||

|

# actually calls where(), but we don't want to re-extract the file

|

||||||

|

# on every call of where(), so we'll do it once then store it in a

|

||||||

|

# global variable.

|

||||||

|

global _CACERT_CTX

|

||||||

|

global _CACERT_PATH

|

||||||

|

if _CACERT_PATH is None:

|

||||||

|

# This is slightly janky, the importlib.resources API wants you to

|

||||||

|

# manage the cleanup of this file, so it doesn't actually return a

|

||||||

|

# path, it returns a context manager that will give you the path

|

||||||

|

# when you enter it and will do any cleanup when you leave it. In

|

||||||

|

# the common case of not needing a temporary file, it will just

|

||||||

|

# return the file system location and the __exit__() is a no-op.

|

||||||

|

#

|

||||||

|

# We also have to hold onto the actual context manager, because

|

||||||

|

# it will do the cleanup whenever it gets garbage collected, so

|

||||||

|

# we will also store that at the global level as well.

|

||||||

|

_CACERT_CTX = as_file(files("certifi").joinpath("cacert.pem"))

|

||||||

|

_CACERT_PATH = str(_CACERT_CTX.__enter__())

|

||||||

|

atexit.register(exit_cacert_ctx)

|

||||||

|

|

||||||

|

return _CACERT_PATH

|

||||||

|

|

||||||

|

def contents() -> str:

|

||||||

|

return files("certifi").joinpath("cacert.pem").read_text(encoding="ascii")

|

||||||

|

|

||||||

|

elif sys.version_info >= (3, 7):

|

||||||

|

|

||||||

|

from importlib.resources import path as get_path, read_text

|

||||||

|

|

||||||

|

_CACERT_CTX = None

|

||||||

|

_CACERT_PATH = None

|

||||||

|

|

||||||

|

def where() -> str:

|

||||||

|

# This is slightly terrible, but we want to delay extracting the

|

||||||

|

# file in cases where we're inside of a zipimport situation until

|

||||||

|

# someone actually calls where(), but we don't want to re-extract

|

||||||

|

# the file on every call of where(), so we'll do it once then store

|

||||||

|

# it in a global variable.

|

||||||

|

global _CACERT_CTX

|

||||||

|

global _CACERT_PATH

|

||||||

|

if _CACERT_PATH is None:

|

||||||

|

# This is slightly janky, the importlib.resources API wants you

|

||||||

|

# to manage the cleanup of this file, so it doesn't actually

|

||||||

|

# return a path, it returns a context manager that will give

|

||||||

|

# you the path when you enter it and will do any cleanup when

|

||||||

|

# you leave it. In the common case of not needing a temporary

|

||||||

|

# file, it will just return the file system location and the

|

||||||

|

# __exit__() is a no-op.

|

||||||

|

#

|

||||||

|

# We also have to hold onto the actual context manager, because

|

||||||

|

# it will do the cleanup whenever it gets garbage collected, so

|

||||||

|

# we will also store that at the global level as well.

|

||||||

|

_CACERT_CTX = get_path("certifi", "cacert.pem")

|

||||||

|

_CACERT_PATH = str(_CACERT_CTX.__enter__())

|

||||||

|

atexit.register(exit_cacert_ctx)

|

||||||

|

|

||||||

|

return _CACERT_PATH

|

||||||

|

|

||||||

|

def contents() -> str:

|

||||||

|

return read_text("certifi", "cacert.pem", encoding="ascii")

|

||||||

|

|

||||||

|

else:

|

||||||

|

import os

|

||||||

|

import types

|

||||||

|

from typing import Union

|

||||||

|

|

||||||

|

Package = Union[types.ModuleType, str]

|

||||||

|

Resource = Union[str, "os.PathLike"]

|

||||||

|

|

||||||

|

# This fallback will work for Python versions prior to 3.7 that lack the

|

||||||

|

# importlib.resources module but relies on the existing `where` function

|

||||||

|

# so won't address issues with environments like PyOxidizer that don't set

|

||||||

|

# __file__ on modules.

|

||||||

|

def read_text(

|

||||||

|

package: Package,

|

||||||

|

resource: Resource,

|

||||||

|

encoding: str = 'utf-8',

|

||||||

|

errors: str = 'strict'

|

||||||

|

) -> str:

|

||||||

|

with open(where(), encoding=encoding) as data:

|

||||||

|

return data.read()

|

||||||

|

|

||||||

|

# If we don't have importlib.resources, then we will just do the old logic

|

||||||

|

# of assuming we're on the filesystem and munge the path directly.

|

||||||

|

def where() -> str:

|

||||||

|

f = os.path.dirname(__file__)

|

||||||

|

|

||||||

|

return os.path.join(f, "cacert.pem")

|

||||||

|

|

||||||

|

def contents() -> str:

|

||||||

|

return read_text("certifi", "cacert.pem", encoding="ascii")

|

||||||

|

|

@ -0,0 +1 @@

|

||||||

|

pip

|

||||||

|

|

@ -0,0 +1,21 @@

|

||||||

|

MIT License

|

||||||

|

|

||||||

|

Copyright (c) 2019 TAHRI Ahmed R.

|

||||||

|

|

||||||

|

Permission is hereby granted, free of charge, to any person obtaining a copy

|

||||||

|

of this software and associated documentation files (the "Software"), to deal

|

||||||

|

in the Software without restriction, including without limitation the rights

|

||||||

|

to use, copy, modify, merge, publish, distribute, sublicense, and/or sell

|

||||||

|

copies of the Software, and to permit persons to whom the Software is

|

||||||

|

furnished to do so, subject to the following conditions:

|

||||||

|

|

||||||

|

The above copyright notice and this permission notice shall be included in all

|

||||||

|

copies or substantial portions of the Software.

|

||||||

|

|

||||||

|

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

|

||||||

|

IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

|

||||||

|

FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE

|

||||||

|

AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

|

||||||

|

LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,

|

||||||

|

OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE

|

||||||

|

SOFTWARE.

|

||||||

|

|

@ -0,0 +1,683 @@

|

||||||

|

Metadata-Version: 2.1

|

||||||

|

Name: charset-normalizer

|

||||||

|

Version: 3.3.2

|

||||||

|

Summary: The Real First Universal Charset Detector. Open, modern and actively maintained alternative to Chardet.

|

||||||

|

Home-page: https://github.com/Ousret/charset_normalizer

|

||||||

|

Author: Ahmed TAHRI

|

||||||

|

Author-email: ahmed.tahri@cloudnursery.dev

|

||||||

|

License: MIT

|

||||||

|

Project-URL: Bug Reports, https://github.com/Ousret/charset_normalizer/issues

|

||||||

|

Project-URL: Documentation, https://charset-normalizer.readthedocs.io/en/latest

|

||||||

|

Keywords: encoding,charset,charset-detector,detector,normalization,unicode,chardet,detect

|

||||||

|

Classifier: Development Status :: 5 - Production/Stable

|

||||||

|

Classifier: License :: OSI Approved :: MIT License

|

||||||

|

Classifier: Intended Audience :: Developers

|

||||||

|

Classifier: Topic :: Software Development :: Libraries :: Python Modules

|

||||||

|

Classifier: Operating System :: OS Independent

|

||||||

|

Classifier: Programming Language :: Python

|

||||||

|

Classifier: Programming Language :: Python :: 3

|

||||||

|

Classifier: Programming Language :: Python :: 3.7

|

||||||

|

Classifier: Programming Language :: Python :: 3.8

|

||||||

|

Classifier: Programming Language :: Python :: 3.9

|

||||||

|

Classifier: Programming Language :: Python :: 3.10

|

||||||

|

Classifier: Programming Language :: Python :: 3.11

|

||||||

|

Classifier: Programming Language :: Python :: 3.12

|

||||||

|

Classifier: Programming Language :: Python :: Implementation :: PyPy

|

||||||

|

Classifier: Topic :: Text Processing :: Linguistic

|

||||||

|

Classifier: Topic :: Utilities

|

||||||

|

Classifier: Typing :: Typed

|

||||||

|

Requires-Python: >=3.7.0

|

||||||

|

Description-Content-Type: text/markdown

|

||||||

|

License-File: LICENSE

|

||||||

|

Provides-Extra: unicode_backport

|

||||||

|

|

||||||

|

<h1 align="center">Charset Detection, for Everyone 👋</h1>

|

||||||

|

|

||||||

|

<p align="center">

|

||||||

|

<sup>The Real First Universal Charset Detector</sup><br>

|

||||||

|

<a href="https://pypi.org/project/charset-normalizer">

|

||||||

|

<img src="https://img.shields.io/pypi/pyversions/charset_normalizer.svg?orange=blue" />

|

||||||

|

</a>

|

||||||

|

<a href="https://pepy.tech/project/charset-normalizer/">

|

||||||

|

<img alt="Download Count Total" src="https://static.pepy.tech/badge/charset-normalizer/month" />

|

||||||

|

</a>

|

||||||

|

<a href="https://bestpractices.coreinfrastructure.org/projects/7297">

|

||||||

|

<img src="https://bestpractices.coreinfrastructure.org/projects/7297/badge">

|

||||||

|

</a>

|

||||||

|

</p>

|

||||||

|

<p align="center">

|

||||||

|

<sup><i>Featured Packages</i></sup><br>

|

||||||

|

<a href="https://github.com/jawah/niquests">

|

||||||

|

<img alt="Static Badge" src="https://img.shields.io/badge/Niquests-HTTP_1.1%2C%202%2C_and_3_Client-cyan">

|

||||||

|

</a>

|

||||||

|

<a href="https://github.com/jawah/wassima">

|

||||||

|

<img alt="Static Badge" src="https://img.shields.io/badge/Wassima-Certifi_Killer-cyan">

|

||||||

|

</a>

|

||||||

|

</p>

|

||||||

|

<p align="center">

|

||||||

|

<sup><i>In other language (unofficial port - by the community)</i></sup><br>

|

||||||

|

<a href="https://github.com/nickspring/charset-normalizer-rs">

|

||||||

|

<img alt="Static Badge" src="https://img.shields.io/badge/Rust-red">

|

||||||

|

</a>

|

||||||

|

</p>

|

||||||

|

|

||||||

|

> A library that helps you read text from an unknown charset encoding.<br /> Motivated by `chardet`,

|

||||||

|

> I'm trying to resolve the issue by taking a new approach.

|

||||||

|

> All IANA character set names for which the Python core library provides codecs are supported.

|

||||||

|

|

||||||

|

<p align="center">

|

||||||

|

>>>>> <a href="https://charsetnormalizerweb.ousret.now.sh" target="_blank">👉 Try Me Online Now, Then Adopt Me 👈 </a> <<<<<

|

||||||

|

</p>

|

||||||

|

|

||||||

|

This project offers you an alternative to **Universal Charset Encoding Detector**, also known as **Chardet**.

|

||||||

|

|

||||||

|

| Feature | [Chardet](https://github.com/chardet/chardet) | Charset Normalizer | [cChardet](https://github.com/PyYoshi/cChardet) |

|

||||||

|

|--------------------------------------------------|:---------------------------------------------:|:--------------------------------------------------------------------------------------------------:|:-----------------------------------------------:|

|

||||||

|

| `Fast` | ❌ | ✅ | ✅ |

|

||||||

|

| `Universal**` | ❌ | ✅ | ❌ |

|

||||||

|

| `Reliable` **without** distinguishable standards | ❌ | ✅ | ✅ |

|

||||||

|

| `Reliable` **with** distinguishable standards | ✅ | ✅ | ✅ |

|

||||||

|

| `License` | LGPL-2.1<br>_restrictive_ | MIT | MPL-1.1<br>_restrictive_ |

|

||||||

|

| `Native Python` | ✅ | ✅ | ❌ |

|

||||||

|

| `Detect spoken language` | ❌ | ✅ | N/A |

|

||||||

|

| `UnicodeDecodeError Safety` | ❌ | ✅ | ❌ |

|

||||||

|

| `Whl Size (min)` | 193.6 kB | 42 kB | ~200 kB |

|

||||||

|

| `Supported Encoding` | 33 | 🎉 [99](https://charset-normalizer.readthedocs.io/en/latest/user/support.html#supported-encodings) | 40 |

|

||||||

|

|

||||||

|

<p align="center">

|

||||||

|

<img src="https://i.imgflip.com/373iay.gif" alt="Reading Normalized Text" width="226"/><img src="https://media.tenor.com/images/c0180f70732a18b4965448d33adba3d0/tenor.gif" alt="Cat Reading Text" width="200"/>

|

||||||

|

</p>

|

||||||

|

|

||||||

|

*\*\* : They are clearly using specific code for a specific encoding even if covering most of used one*<br>

|

||||||

|

Did you get here because of the logs? See [https://charset-normalizer.readthedocs.io/en/latest/user/miscellaneous.html](https://charset-normalizer.readthedocs.io/en/latest/user/miscellaneous.html)

|

||||||

|

|

||||||

|

## ⚡ Performance

|

||||||

|

|

||||||

|

This package offers better performance than its counterpart Chardet. Here are some numbers.

|

||||||

|

|

||||||

|

| Package | Accuracy | Mean per file (ms) | File per sec (est) |

|

||||||

|

|-----------------------------------------------|:--------:|:------------------:|:------------------:|

|

||||||

|

| [chardet](https://github.com/chardet/chardet) | 86 % | 200 ms | 5 file/sec |

|

||||||

|

| charset-normalizer | **98 %** | **10 ms** | 100 file/sec |

|

||||||

|

|

||||||

|

| Package | 99th percentile | 95th percentile | 50th percentile |

|

||||||

|

|-----------------------------------------------|:---------------:|:---------------:|:---------------:|

|

||||||

|

| [chardet](https://github.com/chardet/chardet) | 1200 ms | 287 ms | 23 ms |

|

||||||

|

| charset-normalizer | 100 ms | 50 ms | 5 ms |

|

||||||

|

|

||||||

|

Chardet's performance on larger files (1MB+) is very poor. Expect a huge difference on large payloads.

|

||||||

|

|

||||||

|

> Stats are generated using 400+ files using default parameters. More details on used files, see GHA workflows.

|

||||||

|

> And yes, these results might change at any time. The dataset can be updated to include more files.

|

||||||

|

> The actual delays heavily depend on your CPU capabilities. The factors should remain the same.

|

||||||

|

> Keep in mind that the stats are generous and that Chardet accuracy vs our is measured using Chardet initial capability

|

||||||

|

> (eg. Supported Encoding) Challenge-them if you want.

|

||||||

|

|

||||||

|

## ✨ Installation

|

||||||

|

|

||||||

|

Using pip:

|

||||||

|

|

||||||

|

```sh

|

||||||

|

pip install charset-normalizer -U

|

||||||

|

```

|

||||||

|

|

||||||

|

## 🚀 Basic Usage

|

||||||

|

|

||||||

|

### CLI

|

||||||

|

This package comes with a CLI.

|

||||||

|

|

||||||

|

```

|

||||||

|

usage: normalizer [-h] [-v] [-a] [-n] [-m] [-r] [-f] [-t THRESHOLD]

|

||||||

|

file [file ...]

|

||||||

|

|

||||||

|

The Real First Universal Charset Detector. Discover originating encoding used

|

||||||

|

on text file. Normalize text to unicode.

|

||||||

|

|

||||||

|

positional arguments:

|

||||||

|

files File(s) to be analysed

|

||||||

|

|

||||||

|

optional arguments:

|

||||||

|

-h, --help show this help message and exit

|

||||||

|

-v, --verbose Display complementary information about file if any.

|

||||||

|

Stdout will contain logs about the detection process.

|

||||||

|

-a, --with-alternative

|

||||||

|

Output complementary possibilities if any. Top-level

|

||||||

|

JSON WILL be a list.

|

||||||

|

-n, --normalize Permit to normalize input file. If not set, program

|

||||||

|

does not write anything.

|

||||||

|

-m, --minimal Only output the charset detected to STDOUT. Disabling

|

||||||

|

JSON output.

|

||||||

|

-r, --replace Replace file when trying to normalize it instead of

|

||||||

|

creating a new one.

|

||||||

|

-f, --force Replace file without asking if you are sure, use this

|

||||||

|

flag with caution.

|

||||||

|

-t THRESHOLD, --threshold THRESHOLD

|

||||||

|

Define a custom maximum amount of chaos allowed in

|

||||||

|

decoded content. 0. <= chaos <= 1.

|

||||||

|

--version Show version information and exit.

|

||||||

|

```

|

||||||

|

|

||||||

|

```bash

|

||||||

|

normalizer ./data/sample.1.fr.srt

|

||||||

|

```

|

||||||

|

|

||||||

|

or

|

||||||

|

|

||||||

|

```bash

|

||||||

|

python -m charset_normalizer ./data/sample.1.fr.srt

|

||||||

|

```

|

||||||

|

|

||||||

|

🎉 Since version 1.4.0 the CLI produces an easily usable stdout result in JSON format.

|

||||||

|

|

||||||

|

```json

|

||||||

|

{

|

||||||

|

"path": "/home/default/projects/charset_normalizer/data/sample.1.fr.srt",

|

||||||

|

"encoding": "cp1252",

|

||||||

|

"encoding_aliases": [

|

||||||

|

"1252",

|

||||||

|

"windows_1252"

|

||||||

|

],

|

||||||

|

"alternative_encodings": [

|

||||||

|

"cp1254",

|

||||||

|

"cp1256",

|

||||||

|

"cp1258",

|

||||||

|

"iso8859_14",

|

||||||

|

"iso8859_15",

|

||||||

|

"iso8859_16",

|

||||||

|

"iso8859_3",

|

||||||

|

"iso8859_9",

|

||||||

|

"latin_1",

|

||||||

|

"mbcs"

|

||||||

|

],

|

||||||

|

"language": "French",

|

||||||

|

"alphabets": [

|

||||||

|

"Basic Latin",

|

||||||

|

"Latin-1 Supplement"

|

||||||

|

],

|

||||||

|

"has_sig_or_bom": false,

|

||||||

|

"chaos": 0.149,

|

||||||

|

"coherence": 97.152,

|

||||||

|

"unicode_path": null,

|

||||||

|

"is_preferred": true

|

||||||

|

}

|

||||||

|

```

|

||||||

|

|

||||||

|

### Python

|

||||||

|

*Just print out normalized text*

|

||||||

|

```python

|

||||||

|

from charset_normalizer import from_path

|

||||||

|

|

||||||

|

results = from_path('./my_subtitle.srt')

|

||||||

|

|

||||||

|

print(str(results.best()))

|

||||||

|

```

|

||||||

|

|

||||||

|

*Upgrade your code without effort*

|

||||||

|

```python

|

||||||

|

from charset_normalizer import detect

|

||||||

|

```

|

||||||

|

|

||||||

|

The above code will behave the same as **chardet**. We ensure that we offer the best (reasonable) BC result possible.

|

||||||

|

|

||||||

|

See the docs for advanced usage : [readthedocs.io](https://charset-normalizer.readthedocs.io/en/latest/)

|

||||||

|

|

||||||

|

## 😇 Why

|

||||||

|

|

||||||

|

When I started using Chardet, I noticed that it did not meet my expectations, and I wanted to propose a reliable alternative using a completely different method. Also, I never back down from a good challenge!

|

||||||

|

|

||||||

|

I **don't care** about the **originating charset** encoding, because **two different tables** can produce **two identical rendered strings.** What I want is to get readable text, the best I can.
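
To make the point concrete, here is a small sketch using only Python's built-in codecs. The sample bytes are made up for illustration. `cp1252` and `latin_1` differ only in the 0x80-0x9F range, so a payload that avoids those bytes renders identically under both tables:

```python
# Raw bytes for "café crème" in a single-byte Western European encoding.
payload = b"caf\xe9 cr\xe8me"

as_cp1252 = payload.decode("cp1252")
as_latin_1 = payload.decode("latin_1")

# Both tables map 0xE9 to "é" and 0xE8 to "è", so the decoded text is the same.
same_text = as_cp1252 == as_latin_1
print(as_cp1252, same_text)
```

Either encoding name is therefore an equally valid answer for this payload; only the readable text matters.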

|

||||||

|

|

||||||

|

In a way, **I'm brute forcing text decoding.** How cool is that? 😎

|

||||||

|

|

||||||

|

Don't confuse the **ftfy** package with charset-normalizer or chardet. ftfy's goal is to repair broken Unicode strings, whereas charset-normalizer converts a raw file in an unknown encoding to Unicode.

|

||||||

|

|

||||||

|

## 🍰 How

|

||||||

|

|

||||||

|

- Discard all charset encoding tables that could not fit the binary content.

|

||||||

|

- Measure noise, or the mess once opened (by chunks) with a corresponding charset encoding.

|

||||||

|

- Extract matches with the lowest mess detected.

|

||||||

|

- Additionally, we measure coherence / probe for a language.
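
The first three steps above can be sketched with the standard library alone. This is a toy illustration, not the library's actual implementation: the candidate list, the `mess_ratio` heuristic (it only counts control, unassigned, private-use and replacement characters) and the `guess` function are all assumptions made for this example. Chunking and the coherence step are left out.

```python
import unicodedata

# Hypothetical short list of candidate tables; the real library tries many more.
CANDIDATES = ["ascii", "utf_8", "latin_1"]


def mess_ratio(text: str) -> float:
    """Toy noise measure: share of characters that look suspicious."""
    if not text:
        return 0.0
    suspicious = 0
    for ch in text:
        category = unicodedata.category(ch)
        # Control (Cc), unassigned (Cn) and private-use (Co) characters are
        # rarely found in text written by humans; common whitespace is fine.
        if category in ("Cc", "Cn", "Co") and ch not in "\t\n\r":
            suspicious += 1
        elif ch == "\ufffd":
            suspicious += 1
    return suspicious / len(text)


def guess(payload: bytes):
    """Return (mess, encoding, text) tuples, lowest mess first."""
    matches = []
    for name in CANDIDATES:
        try:
            text = payload.decode(name)
        except UnicodeDecodeError:
            continue  # Step 1: this table cannot fit the binary content.
        matches.append((mess_ratio(text), name, text))  # Step 2: measure mess.
    matches.sort(key=lambda match: match[0])  # Step 3: lowest mess first.
    return matches


result = guess("déjà vu €".encode("utf_8"))
print([name for _, name, _ in result])
```

Here `ascii` is discarded because it cannot decode the payload at all, while `latin_1` decodes it but yields a C1 control character, so it ranks behind `utf_8`.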

|

||||||

|

|

||||||

|

**Wait a minute**, what is noise/mess and coherence according to **YOU?**

|

||||||

|

|

||||||

|

*Noise:* I opened hundreds of text files, **written by humans**, with the wrong encoding table. **I observed**, then **I established** some ground rules about **what is obvious** when **it seems like** a mess. I know that my interpretation of what is noise is probably incomplete; feel free to contribute in order to improve or rewrite it.

|

||||||

|

|

||||||

|

*Coherence:* For each language on earth, we have computed ranked letter frequencies (as best we can). So I thought that intel is worth something here. So I use those records against decoded text to check if I can detect intelligent design.
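
The idea of checking decoded text against ranked letter frequencies can be sketched as follows. This is a simplified illustration, not the project's coherence detector: the `REFERENCE` rankings are approximate and hypothetical, and the `coherence` function with its `top` parameter exists only for this example.

```python
from collections import Counter

# Approximate, illustrative rankings of the most frequent letters per language.
REFERENCE = {
    "English": "etaoinshrdlu",
    "French": "esaitnrulodc",
}


def coherence(text: str, language: str, top: int = 6) -> float:
    """Share of the text's most frequent letters that are also top-ranked
    letters of the given language (0.0 to 1.0)."""
    letters = [ch for ch in text.lower() if ch.isalpha()]
    if not letters:
        return 0.0
    observed = [ch for ch, _ in Counter(letters).most_common(top)]
    expected = set(REFERENCE[language][:top])
    return sum(1 for ch in observed if ch in expected) / len(observed)


sample = "eeee tttt aaa oo i n"
print(coherence(sample, "English"), coherence(sample, "French"))
```

A decoding that yields a high score for some language is more likely to be real text than one whose letter distribution matches nothing.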

|

||||||

|

|

||||||

|

## ⚡ Known limitations

|

||||||

|

|

||||||

|

- Language detection is unreliable when text contains two or more languages sharing identical letters (e.g. HTML with English tags plus Turkish content, both using Latin characters).

|

||||||

|

- Every charset detector heavily depends on sufficient content. In common cases, do not bother running detection on very tiny content.

|

||||||

|

|

||||||

|

## ⚠️ About Python EOLs

|

||||||

|

|

||||||

|

**If you are running:**

|

||||||

|

|

||||||

|

- Python >=2.7,<3.5: Unsupported

|

||||||

|

- Python 3.5: charset-normalizer < 2.1

|

||||||

|

- Python 3.6: charset-normalizer < 3.1

|

||||||

|

- Python 3.7: charset-normalizer < 4.0

|

||||||

|

|

||||||

|

Upgrade your Python interpreter as soon as possible.

|

||||||

|

|

||||||

|

## 👤 Contributing

|

||||||

|

|

||||||

|

Contributions, issues and feature requests are very much welcome.<br />

|

||||||

|

Feel free to check the [issues page](https://github.com/ousret/charset_normalizer/issues) if you want to contribute.

|

||||||

|

|

||||||

|

## 📝 License

|

||||||

|

|

||||||

|

Copyright © [Ahmed TAHRI @Ousret](https://github.com/Ousret).<br />

|

||||||

|

This project is [MIT](https://github.com/Ousret/charset_normalizer/blob/master/LICENSE) licensed.

|

||||||

|

|

||||||

|

Character frequencies used in this project © 2012 [Denny Vrandečić](http://simia.net/letters/)

|

||||||

|

|

||||||

|

## 💼 For Enterprise

|

||||||

|

|

||||||

|

Professional support for charset-normalizer is available as part of the [Tidelift Subscription][1]. Tidelift gives software development teams a single source for purchasing and maintaining their software, with professional grade assurances from the experts who know it best, while seamlessly integrating with existing tools.

|

||||||

|

|

||||||

|

[1]: https://tidelift.com/subscription/pkg/pypi-charset-normalizer?utm_source=pypi-charset-normalizer&utm_medium=readme

|

||||||

|

|

||||||

|

# Changelog

|

||||||

|

All notable changes to charset-normalizer will be documented in this file. This project adheres to [Semantic Versioning](https://semver.org/spec/v2.0.0.html).

|

||||||

|

The format is based on [Keep a Changelog](https://keepachangelog.com/en/1.0.0/).

|

||||||

|

|

||||||

|

## [3.3.2](https://github.com/Ousret/charset_normalizer/compare/3.3.1...3.3.2) (2023-10-31)

|

||||||

|

|

||||||

|

### Fixed

|

||||||

|

- Unintentional memory usage regression when using a large payload that matches several encodings (#376)

|

||||||

|

- Regression on some detection case showcased in the documentation (#371)

|

||||||

|

|

||||||

|

### Added

|

||||||

|

- Noise (md) probe that identifies malformed Arabic representation due to the presence of letters in isolated form (credit to my wife)

|

||||||

|

|

||||||

|

## [3.3.1](https://github.com/Ousret/charset_normalizer/compare/3.3.0...3.3.1) (2023-10-22)

|

||||||

|

|

||||||

|

### Changed

|

||||||

|

- Optional mypyc compilation upgraded to version 1.6.1 for Python >= 3.8

|

||||||

|

- Improved the general detection reliability based on reports from the community

|

||||||

|

|

||||||

|

## [3.3.0](https://github.com/Ousret/charset_normalizer/compare/3.2.0...3.3.0) (2023-09-30)

|

||||||

|

|

||||||

|

### Added

|

||||||

|

- Allow to execute the CLI (e.g. normalizer) through `python -m charset_normalizer.cli` or `python -m charset_normalizer`

|

||||||

|

- Support for 9 forgotten encodings that are supported by Python but unlisted in `encoding.aliases` as they have no alias (#323)

|

||||||

|

|

||||||

|

### Removed

|

||||||

|

- (internal) Redundant utils.is_ascii function and unused function is_private_use_only

|

||||||

|

- (internal) charset_normalizer.assets is moved inside charset_normalizer.constant

|

||||||

|

|

||||||

|

### Changed

|

||||||

|

- (internal) Unicode code blocks in constants are updated using the latest v15.0.0 definition to improve detection

|

||||||

|

- Optional mypyc compilation upgraded to version 1.5.1 for Python >= 3.8

|

||||||

|

|

||||||

|

### Fixed

|

||||||

|

- Unable to properly sort CharsetMatch when both chaos/noise and coherence were close due to an unreachable condition in \_\_lt\_\_ (#350)

|

||||||

|

|

||||||

|

## [3.2.0](https://github.com/Ousret/charset_normalizer/compare/3.1.0...3.2.0) (2023-06-07)

|

||||||

|

|

||||||

|

### Changed

|

||||||

|

- Typehint for function `from_path` no longer enforces `PathLike` as its first argument

|

||||||

|

- Minor improvement over the global detection reliability

|

||||||

|

|

||||||

|

### Added

|

||||||

|

- Introduce function `is_binary` that relies on main capabilities, and optimized to detect binaries

|

||||||

|

- Propagate the `enable_fallback` argument throughout `from_bytes`, `from_path`, and `from_fp`, which allows deeper control over the detection (default True)

|

||||||

|

- Explicit support for Python 3.12

|

||||||

|

|

||||||

|

### Fixed

|

||||||

|

- Edge case detection failure where a file would contain 'very-long' camel cased word (Issue #289)

|

||||||

|

|

||||||

|

## [3.1.0](https://github.com/Ousret/charset_normalizer/compare/3.0.1...3.1.0) (2023-03-06)

|

||||||

|

|

||||||

|

### Added

|

||||||

|

- Argument `should_rename_legacy` for legacy function `detect` and disregard any new arguments without errors (PR #262)

|

||||||

|

|

||||||

|

### Removed

|

||||||

|

- Support for Python 3.6 (PR #260)

|

||||||

|

|

||||||

|

### Changed

|

||||||

|

- Optional speedup provided by mypy/c 1.0.1

|

||||||

|

|

||||||

|

## [3.0.1](https://github.com/Ousret/charset_normalizer/compare/3.0.0...3.0.1) (2022-11-18)

|

||||||

|

|

||||||

|

### Fixed

|

||||||

|

- Multi-bytes cutter/chunk generator did not always cut correctly (PR #233)

|

||||||

|

|

||||||

|

### Changed

|

||||||

|

- Speedup provided by mypy/c 0.990 on Python >= 3.7

|

||||||

|

|

||||||

|

## [3.0.0](https://github.com/Ousret/charset_normalizer/compare/2.1.1...3.0.0) (2022-10-20)

|

||||||

|

|

||||||

|

### Added

|

||||||

|

- Extend the capability of explain=True: when cp_isolation contains at most two entries (min one), the Mess-detector results are logged in detail

|

||||||

|

- Support for alternative language frequency set in charset_normalizer.assets.FREQUENCIES

|

||||||

|

- Add parameter `language_threshold` in `from_bytes`, `from_path` and `from_fp` to adjust the minimum expected coherence ratio

|

||||||

|

- `normalizer --version` now specifies whether the current version provides extra speedup (meaning a mypyc-compiled whl)

|

||||||

|

|

||||||

|

### Changed

|

||||||

|

- Build with static metadata using 'build' frontend

|

||||||

|

- Make the language detection stricter

|

||||||

|

- Optional: Module `md.py` can be compiled using Mypyc to provide an extra speedup up to 4x faster than v2.1

|

||||||

|

|

||||||

|

### Fixed

|

||||||

|

- CLI with opt --normalize fails when using a full path for files

|

||||||

|

- TooManyAccentuatedPlugin induces false positives in the mess detection when too few alpha characters have been fed to it

|

||||||

|

- Sphinx warnings when generating the documentation

|

||||||

|

|

||||||

|

### Removed

|

||||||

|

- Coherence detector no longer returns 'Simple English'; it returns 'English' instead

|

||||||

|

- Coherence detector no longer returns 'Classical Chinese'; it returns 'Chinese' instead

|

||||||

|

- Breaking: Method `first()` and `best()` from CharsetMatch

|

||||||

|

- UTF-7 will no longer appear as "detected" without a recognized SIG/mark (is unreliable/conflict with ASCII)

|

||||||

|

- Breaking: Class aliases CharsetDetector, CharsetDoctor, CharsetNormalizerMatch and CharsetNormalizerMatches

|

||||||

|

- Breaking: Top-level function `normalize`

|

||||||

|

- Breaking: Properties `chaos_secondary_pass`, `coherence_non_latin` and `w_counter` from CharsetMatch

|

||||||

|

- Support for the backport `unicodedata2`

|

||||||

|

|

||||||

|

## [3.0.0rc1](https://github.com/Ousret/charset_normalizer/compare/3.0.0b2...3.0.0rc1) (2022-10-18)

|

||||||

|

|

||||||

|

### Added

|

||||||

|

- Extend the capability of explain=True: when cp_isolation contains at most two entries (min one), the Mess-detector results are logged in detail

|

||||||

|

- Support for alternative language frequency set in charset_normalizer.assets.FREQUENCIES

|

||||||

|

- Add parameter `language_threshold` in `from_bytes`, `from_path` and `from_fp` to adjust the minimum expected coherence ratio

|

||||||

|

|

||||||

|

### Changed

|

||||||

|

- Build with static metadata using 'build' frontend

|

||||||

|

- Make the language detection stricter

|

||||||

|

|

||||||

|

### Fixed

|

||||||

|

- CLI with opt --normalize fails when using a full path for files

|

||||||

|

- TooManyAccentuatedPlugin induces false positives in the mess detection when too few alpha characters have been fed to it

|

||||||

|

|

||||||

|

### Removed

|

||||||

|

- Coherence detector no longer returns 'Simple English'; it returns 'English' instead

|

||||||

|

- Coherence detector no longer returns 'Classical Chinese'; it returns 'Chinese' instead

|

||||||

|

|

||||||

|

## [3.0.0b2](https://github.com/Ousret/charset_normalizer/compare/3.0.0b1...3.0.0b2) (2022-08-21)

|

||||||

|

|

||||||

|

### Added

|

||||||

|

- `normalizer --version` now specifies whether the current version provides extra speedup (meaning a mypyc-compiled whl)

|

||||||

|

|

||||||

|

### Removed

|

||||||

|

- Breaking: Method `first()` and `best()` from CharsetMatch

|

||||||

|

- UTF-7 will no longer appear as "detected" without a recognized SIG/mark (is unreliable/conflict with ASCII)

|

||||||

|

|

||||||

|

### Fixed

|

||||||

|

- Sphinx warnings when generating the documentation

|

||||||

|

|

||||||

|

## [3.0.0b1](https://github.com/Ousret/charset_normalizer/compare/2.1.0...3.0.0b1) (2022-08-15)

|

||||||

|

|

||||||

|

### Changed

|

||||||

|

- Optional: Module `md.py` can be compiled using Mypyc to provide an extra speedup up to 4x faster than v2.1

|

||||||

|

|

||||||

|

### Removed

|

||||||

|

- Breaking: Class aliases CharsetDetector, CharsetDoctor, CharsetNormalizerMatch and CharsetNormalizerMatches

|

||||||

|

- Breaking: Top-level function `normalize`

|

||||||

|

- Breaking: Properties `chaos_secondary_pass`, `coherence_non_latin` and `w_counter` from CharsetMatch

|

||||||

|

- Support for the backport `unicodedata2`

|

||||||

|

|

||||||

|

## [2.1.1](https://github.com/Ousret/charset_normalizer/compare/2.1.0...2.1.1) (2022-08-19)

|

||||||

|

|

||||||

|

### Deprecated

|

||||||

|

- Function `normalize` scheduled for removal in 3.0

|

||||||

|

|

||||||

|

### Changed

|

||||||

|

- Removed useless call to decode in fn is_unprintable (#206)

|

||||||

|

|

||||||

|

### Fixed

|

||||||

|

- Third-party library (i18n xgettext) crashing not recognizing utf_8 (PEP 263) with underscore from [@aleksandernovikov](https://github.com/aleksandernovikov) (#204)

|

||||||

|

|

||||||

|

## [2.1.0](https://github.com/Ousret/charset_normalizer/compare/2.0.12...2.1.0) (2022-06-19)

|

||||||

|

|

||||||

|

### Added

|

||||||

|

- Output the Unicode table version when running the CLI with `--version` (PR #194)

|

||||||

|

|

||||||

|

### Changed

|

||||||

|

- Re-use decoded buffer for single byte character sets from [@nijel](https://github.com/nijel) (PR #175)

|

||||||

|

- Fixing some performance bottlenecks from [@deedy5](https://github.com/deedy5) (PR #183)

|

||||||

|

|

||||||

|

### Fixed

|

||||||

|

- Workaround potential bug in cpython with Zero Width No-Break Space located in Arabic Presentation Forms-B, Unicode 1.1 not acknowledged as space (PR #175)

|

||||||

|

- CLI default threshold aligned with the API threshold from [@oleksandr-kuzmenko](https://github.com/oleksandr-kuzmenko) (PR #181)

|

||||||

|

|

||||||

|

### Removed

|

||||||

|

- Support for Python 3.5 (PR #192)

|

||||||

|

|

||||||

|

### Deprecated

|

||||||

|

- Use of backport unicodedata from `unicodedata2` as Python is quickly catching up, scheduled for removal in 3.0 (PR #194)

|

||||||

|

|

||||||

|

## [2.0.12](https://github.com/Ousret/charset_normalizer/compare/2.0.11...2.0.12) (2022-02-12)

|

||||||

|

|

||||||

|

### Fixed

|

||||||

|

- ASCII misdetection in rare cases (PR #170)

|

||||||

|

|

||||||

|

## [2.0.11](https://github.com/Ousret/charset_normalizer/compare/2.0.10...2.0.11) (2022-01-30)

|

||||||

|

|

||||||

|

### Added

|

||||||

|

- Explicit support for Python 3.11 (PR #164)

|

||||||

|

|

||||||

|

### Changed

|

||||||

|

- The logging behavior has been completely reviewed, now using only TRACE and DEBUG levels (PR #163 #165)

|

||||||

|

|

||||||

|

## [2.0.10](https://github.com/Ousret/charset_normalizer/compare/2.0.9...2.0.10) (2022-01-04)

|

||||||

|

|

||||||

|

### Fixed

|

||||||

|

- Fallback match entries might lead to UnicodeDecodeError for large bytes sequence (PR #154)

|

||||||

|

|

||||||

|

### Changed

|

||||||

|

- Skipping the language-detection (CD) on ASCII (PR #155)

|

||||||

|

|

||||||

|

## [2.0.9](https://github.com/Ousret/charset_normalizer/compare/2.0.8...2.0.9) (2021-12-03)

|

||||||

|

|

||||||

|

### Changed

|

||||||

|

- Moderating the logging impact (since 2.0.8) for specific environments (PR #147)

|

||||||

|

|

||||||

|

### Fixed

|

||||||

|

- Wrong logging level applied when setting kwarg `explain` to True (PR #146)

|

||||||

|

|

||||||

|

## [2.0.8](https://github.com/Ousret/charset_normalizer/compare/2.0.7...2.0.8) (2021-11-24)

|

||||||

|

### Changed

|

||||||

|

- Improvement over Vietnamese detection (PR #126)

|

||||||

|

- MD improvement on trailing data and long foreign (non-pure latin) data (PR #124)

|

||||||

|

- Efficiency improvements in cd/alphabet_languages from [@adbar](https://github.com/adbar) (PR #122)

|

||||||

|

- call sum() without an intermediary list following PEP 289 recommendations from [@adbar](https://github.com/adbar) (PR #129)

|

||||||

|

- Code style as refactored by Sourcery-AI (PR #131)

|

||||||

|

- Minor adjustment on the MD around european words (PR #133)

|

||||||

|

- Remove and replace SRTs from assets / tests (PR #139)

|

||||||

|

- Initialize the library logger with a `NullHandler` by default from [@nmaynes](https://github.com/nmaynes) (PR #135)

|

||||||

|

- Setting kwarg `explain` to True will add provisionally (bounded to function lifespan) a specific stream handler (PR #135)

|

||||||

|

|

||||||

|

### Fixed

|

||||||

|

- Fix large (misleading) sequence giving UnicodeDecodeError (PR #137)

|

||||||

|

- Avoid using too insignificant chunk (PR #137)

|

||||||

|

|

||||||

|

### Added

|

||||||

|

- Add and expose function `set_logging_handler` to configure a specific StreamHandler from [@nmaynes](https://github.com/nmaynes) (PR #135)

|

||||||

|

- Add `CHANGELOG.md` entries, format is based on [Keep a Changelog](https://keepachangelog.com/en/1.0.0/) (PR #141)

|

||||||

|

|

||||||

|

## [2.0.7](https://github.com/Ousret/charset_normalizer/compare/2.0.6...2.0.7) (2021-10-11)

|

||||||

|

### Added

|

||||||

|

- Add support for Kazakh (Cyrillic) language detection (PR #109)

|

||||||

|

|

||||||

|

### Changed

|

||||||

|

- Further, improve inferring the language from a given single-byte code page (PR #112)

|

||||||

|

- Vainly trying to leverage PEP263 when PEP3120 is not supported (PR #116)

|

||||||

|

- Refactoring for potential performance improvements in loops from [@adbar](https://github.com/adbar) (PR #113)

|

||||||

|

- Various detection improvement (MD+CD) (PR #117)

|

||||||

|

|

||||||

|

### Removed

|

||||||

|

- Remove redundant logging entry about detected language(s) (PR #115)

|

||||||

|

|

||||||

|

### Fixed

|

||||||

|

- Fix a minor inconsistency between Python 3.5 and other versions regarding language detection (PR #117 #102)

|

||||||

|

|

||||||

|

## [2.0.6](https://github.com/Ousret/charset_normalizer/compare/2.0.5...2.0.6) (2021-09-18)

|

||||||

|

### Fixed

|

||||||

|

- Unforeseen regression with the loss of backward compatibility with some older minor releases of Python 3.5.x (PR #100)

|

||||||

|

- Fix CLI crash when using --minimal output in certain cases (PR #103)

|

||||||

|

|

||||||

|

### Changed

|

||||||

|

- Minor improvement to the detection efficiency (less than 1%) (PR #106 #101)

|

||||||

|

|

||||||

|

## [2.0.5](https://github.com/Ousret/charset_normalizer/compare/2.0.4...2.0.5) (2021-09-14)

|

||||||

|

### Changed

|

||||||

|

- The project now complies with flake8, mypy, isort and black to ensure a better overall quality (PR #81)

|

||||||

|

- The BC-support with v1.x was improved, the old staticmethods are restored (PR #82)

|

||||||

|

- The Unicode detection is slightly improved (PR #93)

|

||||||

|

- Add syntax sugar \_\_bool\_\_ for results CharsetMatches list-container (PR #91)

|

||||||

|

|

||||||

|

### Removed

|

||||||

|

- The project no longer raises a warning on tiny content given for detection; it is simply logged as a warning instead (PR #92)

|

||||||

|

|

||||||

|

### Fixed

|

||||||

|

- In some rare cases, the chunks extractor could cut in the middle of a multi-byte character and could mislead the mess detection (PR #95)

|

||||||

|

- Some rare 'space' characters could trip up the UnprintablePlugin/Mess detection (PR #96)

|

||||||

|

- The MANIFEST.in was not exhaustive (PR #78)

|

||||||

|

|

||||||

|

## [2.0.4](https://github.com/Ousret/charset_normalizer/compare/2.0.3...2.0.4) (2021-07-30)

|

||||||

|

### Fixed

|

||||||

|

- The CLI no longer raises an unexpected exception when no encoding has been found (PR #70)

|

||||||

|

- Fix accessing the 'alphabets' property when the payload contains surrogate characters (PR #68)

|

||||||

|

- The logger could mislead (explain=True) on detected languages and the impact of one MBCS match (PR #72)

|

||||||

|

- Submatch factoring could be wrong in rare edge cases (PR #72)

|

||||||

|

- Multiple files given to the CLI were ignored when publishing results to STDOUT. (After the first path) (PR #72)

|

||||||

|

- Fix line endings from CRLF to LF for certain project files (PR #67)

|

||||||

|

|

||||||

|

### Changed

|

||||||

|

- Adjust the MD to lower the sensitivity, thus improving the global detection reliability (PR #69 #76)

|

||||||

|

- Allow fallback on specified encoding if any (PR #71)

|

||||||

|

|

||||||

|

## [2.0.3](https://github.com/Ousret/charset_normalizer/compare/2.0.2...2.0.3) (2021-07-16)

|

||||||

|

### Changed

|

||||||

|

- Part of the detection mechanism has been improved to be less sensitive, resulting in more accurate detection results. Especially ASCII. (PR #63)

|

||||||

|

- According to the community wishes, the detection will fall back on ASCII or UTF-8 in a last-resort case. (PR #64)

|

||||||

|

|

||||||

|

## [2.0.2](https://github.com/Ousret/charset_normalizer/compare/2.0.1...2.0.2) (2021-07-15)

|

||||||

|

### Fixed

|

||||||

|

- Empty/too small JSON payload misdetection fixed. Report from [@tseaver](https://github.com/tseaver) (PR #59)

|

||||||

|

|

||||||

|

### Changed

|

||||||

|

- Don't inject unicodedata2 into sys.modules from [@akx](https://github.com/akx) (PR #57)

|

||||||

|

|

||||||

|

## [2.0.1](https://github.com/Ousret/charset_normalizer/compare/2.0.0...2.0.1) (2021-07-13)

|

||||||

|

### Fixed

|

||||||

|

- Make it work where there isn't a filesystem available, dropping assets frequencies.json. Report from [@sethmlarson](https://github.com/sethmlarson). (PR #55)

|

||||||

|

- Using explain=False permanently disabled the verbose output in the current runtime (PR #47)

|

||||||

|

- One log entry (language target preemptive) was not shown in logs when using explain=True (PR #47)

|

||||||

|

- Fix undesired exception (ValueError) on getitem of instance CharsetMatches (PR #52)

|

||||||

|

|

||||||

|

### Changed

|

||||||

|

- Public function normalize default args values were not aligned with from_bytes (PR #53)

|

||||||

|

|

||||||

|

### Added

|

||||||

|

- You may now use charset aliases in cp_isolation and cp_exclusion arguments (PR #47)

|

||||||

|

|

||||||

|

## [2.0.0](https://github.com/Ousret/charset_normalizer/compare/1.4.1...2.0.0) (2021-07-02)

|

||||||

|

### Changed

|

||||||

|

- 4 to 5 times faster than the previous 1.4.0 release. At least 2x faster than Chardet.

|

||||||

|

- Emphasis has been placed on UTF-8 detection, which should be nearly instantaneous.

|

||||||

|

- The backward compatibility with Chardet has been greatly improved. The legacy detect function returns an identical charset name whenever possible.

|

||||||

|

- The detection mechanism has been slightly improved, now Turkish content is detected correctly (most of the time)

|

||||||

|

- The program has been rewritten to ease readability and maintainability (now using static typing).

|

||||||

|

- utf_7 detection has been reinstated.

|

||||||

|

|

||||||

|

### Removed

|

||||||

|

- This package no longer requires anything when used with Python 3.5 (dropped cached_property)

|

||||||

|

- Removed support for these languages: Catalan, Esperanto, Kazakh, Basque, Volapük, Azeri, Galician, Nynorsk, Macedonian, and Serbocroatian.

|

||||||

|

- The exception hook on UnicodeDecodeError has been removed.

|

||||||

|

|

||||||

|

### Deprecated

|

||||||

|

- Methods coherence_non_latin, w_counter, chaos_secondary_pass of the class CharsetMatch are now deprecated and scheduled for removal in v3.0

|

||||||

|

|

||||||

|

### Fixed

|

||||||